The fascinating story of the exponential function

From integers to matrix exponents

The way you think about the exponential function is (probably) wrong.

Don't think so? I'll convince you. Did you realize that multiplying e by itself π times doesn't make sense?

Here is what's really behind the most important function of all time.

The exponential

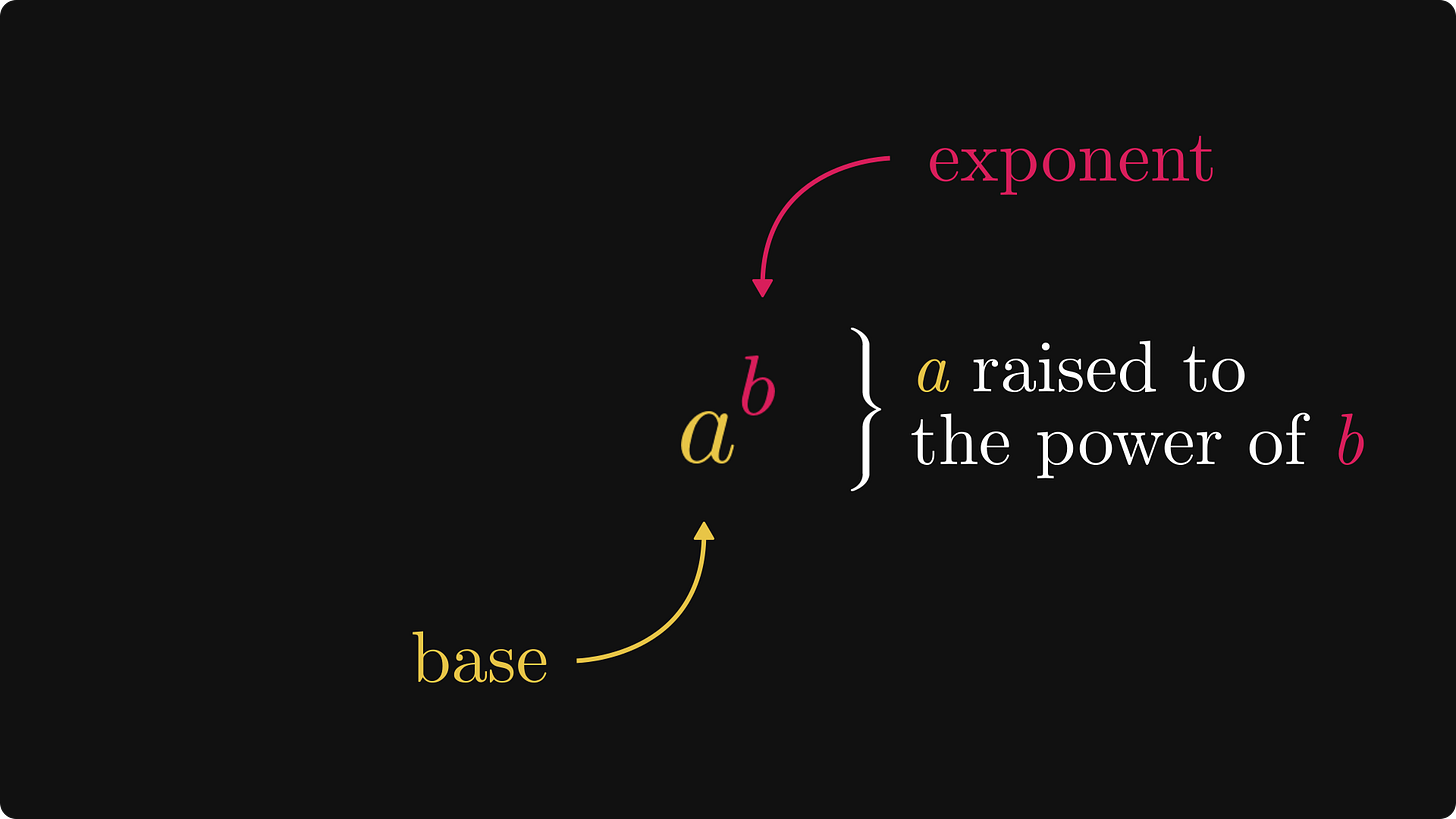

First things first: terminology. The expression aᵇ is read "a raised to the power of b." (Or "a to the b" for short.)

The number a is called the base, and b is called the exponent.

Let's start with the basics: positive integer exponents. By definition, aⁿ is the repeated multiplication of a by itself n times. Sounds simple enough.

But how can we define exponentials for, say, negative integer exponents? We'll get there soon.

For that, two special rules will be our guiding lights. First, exponentiation turns addition into multiplication. We'll call this the "product of powers" rule.

Second, the repeated application of exponentiation is, again, exponentiation. We'll call this the "power of powers" rule.
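In symbols, a sketch of the two rules for positive integer exponents (the letters n and m are notation assumed here for illustration):

```latex
\begin{align*}
a^{n+m} &= a^n \cdot a^m && \text{(product of powers)} \\
(a^n)^m &= a^{nm}        && \text{(power of powers)}
\end{align*}
```

Both follow from counting factors: the left side of the first rule has n + m copies of a, and the left side of the second has m groups of n copies each.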

These two identities form the essence of the exponential function.

To extend the definition to arbitrary powers, we must ensure that these properties remain true.

So, what about, say, zero exponents? Here, the original interpretation (i.e., repeated multiplication) breaks down immediately. How do you multiply a number by itself zero times?

To find the definition, we’ll use wishful thinking.

I am not kidding. Wishful thinking is a well-known and extremely powerful technique. The gist is to assume that powers with zero exponents are well-defined, then use some algebra to find out what the definition might be.

In this case, the "product of powers" property gives the answer: any nonzero number raised to the power of zero should equal 1.
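Here is one way the algebra might go, sketched under the assumption that a ≠ 0 (so that we may divide by aⁿ), with n standing for any positive integer:

```latex
a^n = a^{n+0} = a^n \cdot a^0
\quad\Longrightarrow\quad
a^0 = \frac{a^n}{a^n} = 1
```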

What about negative integers? We cannot repeat multiplication zero times, let alone a negative number of times.

Again, let's use wishful thinking and pretend that there is a definition somewhere. If powers with negative integer exponents are indeed defined, the "product of powers" tells us what they must be.

After all, the algebraic rules for negative exponents must follow the rules for positive integer exponents. This is called the principle of permanence.
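A sketch of the argument, again assuming a ≠ 0 and a positive integer n, and using the fact that a zero exponent gives 1:

```latex
1 = a^0 = a^{n + (-n)} = a^n \cdot a^{-n}
\quad\Longrightarrow\quad
a^{-n} = \frac{1}{a^n}
```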

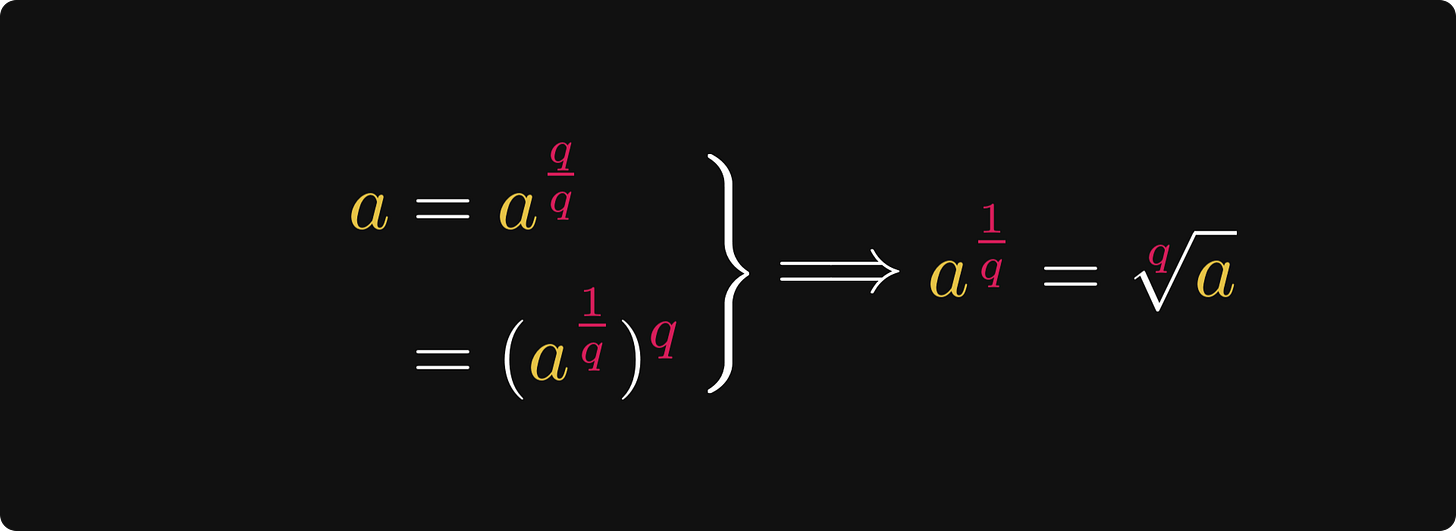

What about rational exponents? You guessed right. Wishful thinking!

The "power of powers" rule shows that it is enough to look at exponents where the numerator is 1.

The same rule shows that rational exponents with numerator 1 must be defined in terms of roots.

Thus, we finally see how to make sense of rational exponents.
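Spelled out, the reasoning could look like this. It is a sketch that assumes a positive base a, an integer p, and a positive integer q (these letters are notation introduced here):

```latex
\begin{align*}
a^{p/q} &= \left(a^{1/q}\right)^{p}
  && \text{(so numerator 1 is enough)} \\
\left(a^{1/q}\right)^{q} &= a^{q/q} = a
  && \text{(so } a^{1/q} = \sqrt[q]{a}\text{)} \\
a^{p/q} &= \left(\sqrt[q]{a}\right)^{p}
\end{align*}
```

Restricting to a > 0 keeps the q-th root well-defined and unique among positive numbers.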

Now, what about arbitrary real numbers? Buckle up. We are about to floor the gas pedal.

So far, we have defined the exponential function for all rationals. Let's use this to our advantage! Real numbers are weird. Fortunately, they have an exceptionally pleasant property: they can be approximated by rational numbers with arbitrary precision.

This is because every interval around a real number, no matter how small, contains a rational number.

When the rational approximations xₙ are close to the actual exponent x, the corresponding powers of a are close to each other as well. Closer and closer as n grows.

Thus, we can define exponentials for arbitrary real exponents by simply taking the limit of the approximations. And we are done!
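To make the limit concrete, here is a minimal Python sketch that approaches 2 raised to the power π using only integer powers and roots. Everything in it is an illustrative assumption rather than a canonical method: the helper names (`int_power`, `nth_root`, `rational_power`, `approximations`), the choice of bisection for the roots, the restriction to bases a ≥ 1, and the four classical rational approximations of π (3, 22/7, 333/106, 355/113).

```python
import math


def int_power(a: float, n: int) -> float:
    """a**n for an integer n >= 0, by repeated multiplication."""
    result = 1.0
    for _ in range(n):
        result *= a
    return result


def nth_root(a: float, q: int, iterations: int = 200) -> float:
    """q-th root of a >= 1, found by bisection on the interval [1, a]."""
    lo, hi = 1.0, a
    for _ in range(iterations):
        mid = (lo + hi) / 2
        if int_power(mid, q) < a:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def rational_power(a: float, p: int, q: int) -> float:
    """a**(p/q), computed as (q-th root of a) raised to the integer power p."""
    return int_power(nth_root(a, q), p)


# Rational approximations p/q of pi, each closer than the previous one.
CONVERGENTS = [(3, 1), (22, 7), (333, 106), (355, 113)]


def approximations(a: float) -> list[float]:
    """Powers of a at each rational approximation of pi."""
    return [rational_power(a, p, q) for p, q in CONVERGENTS]


if __name__ == "__main__":
    reference = 2.0 ** math.pi  # built-in float power, used only for comparison
    for (p, q), value in zip(CONVERGENTS, approximations(2.0)):
        print(f"2^({p}/{q}) = {value:.8f}   error = {abs(value - reference):.2e}")
```

Running it prints one line per approximation; the error column should shrink as the fractions get closer to π, which is the behavior the limit definition relies on.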

If you are curious, this is what the exponential function looks like for various bases.

Is this all there is to the exponential function?

Surprisingly, we can raise the base to a matrix power!

Matrix exponentials

Let’s focus on a special exponential function: the one with Euler’s number as its base. When we refer to the exponential function without naming a base, this is generally the one we are talking about. (From now on, we’ll exclusively talk about this one.)

Why is this so special? Because it is its own derivative!

Here is the thing: you can plug matrices into the exponential. At first thought, this makes even less sense than multiplying e by itself π times.

How can we do this?

With the help of an extremely powerful mathematical tool: the Taylor series.

Taylor series

Let’s talk about differentiation first. (It is not an accident that we were talking about the fact that the exponential function is its own derivative.)

What is differentiation? By definition, the derivative of the function f(x) at the point a is the limit of the difference quotients around a.
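In formula form, this is the standard definition (assuming the limit exists):

```latex
f'(a) = \lim_{x \to a} \frac{f(x) - f(a)}{x - a}
```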

However, we only get the full picture from a geometric perspective. This viewpoint reveals that the derivative is simply the slope of the tangent line.

This tangent line can be described with a simple linear equation.
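A sketch of that equation, writing f'(a) for the derivative of f at a: the line passes through the point (a, f(a)) with slope f'(a).

```latex
y = f(a) + f'(a)\,(x - a)
```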

If you look at the graph of the function, you can see that close to a, the tangent line approximates f(x) pretty well.

As it turns out, this is the best possible local linear approximation.

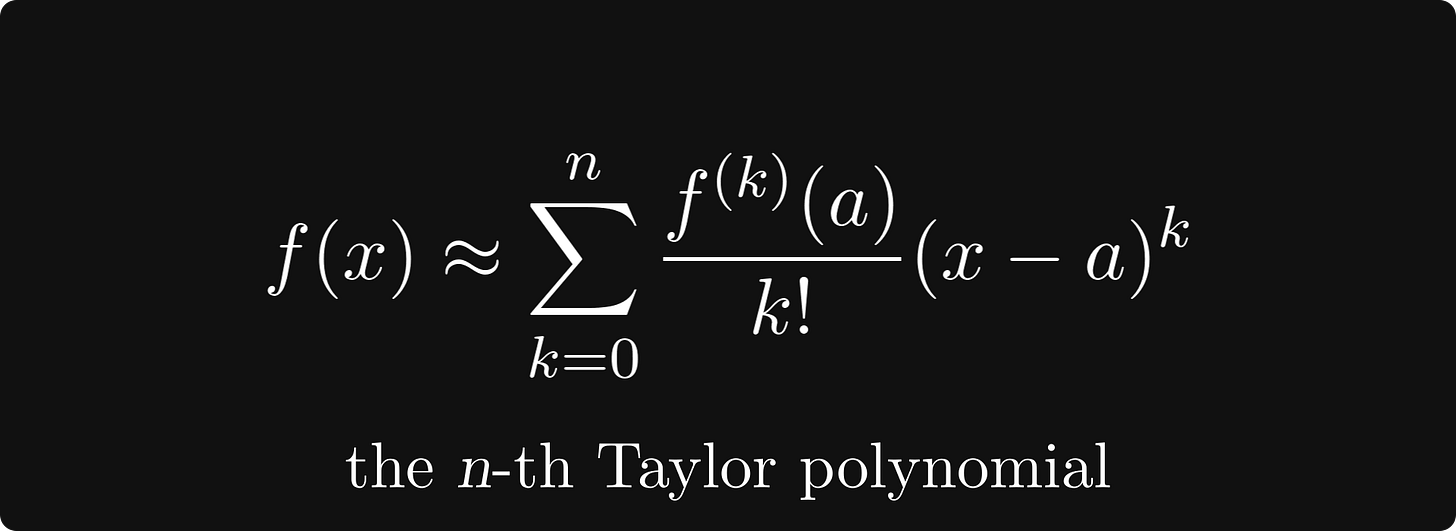

What if a linear approximation is not good enough? We can use even higher-degree polynomials. Surprisingly, the best-approximating polynomials are given by the higher derivatives!

These are called Taylor polynomials, and they play an essential role throughout mathematics. Their importance cannot be emphasized enough.

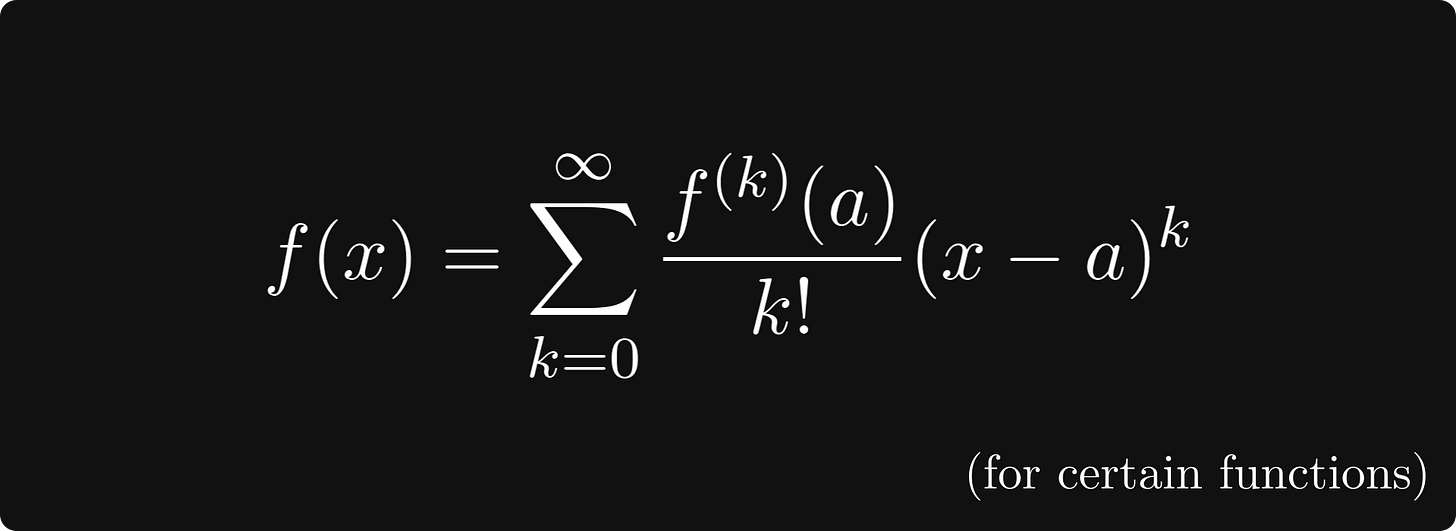

What’s best about this is that for certain functions, we can take n to infinity! This is called the Taylor series of f(x).
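For reference, here are the standard formulas, with T_n as notation assumed here for the degree-n Taylor polynomial of f around a, and f^{(k)} for the k-th derivative:

```latex
\begin{align*}
T_n(x) &= \sum_{k=0}^{n} \frac{f^{(k)}(a)}{k!}\,(x - a)^k
  && \text{(Taylor polynomial)} \\
f(x) &= \sum_{k=0}^{\infty} \frac{f^{(k)}(a)}{k!}\,(x - a)^k
  && \text{(Taylor series, where it converges to } f\text{)}
\end{align*}
```

For n = 1, the polynomial reduces to the tangent line f(a) + f'(a)(x − a).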

Awesome, but how is this relevant to us? Let’s see.

Matrix exponentials and Taylor series

As mentioned earlier, the exponential function is its own derivative. Thus, its Taylor series at a = 0 is simple to obtain.
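Since every derivative of the exponential function at 0 equals e⁰ = 1, all the numerators in the Taylor series are 1, and the expansion takes this form:

```latex
e^x = \sum_{k=0}^{\infty} \frac{x^k}{k!}
    = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots
```

This series converges for every real x.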

Check out this expression: the variable x is raised to an integer power, multiplied by a scalar, then summed over k. (By the way, the Taylor series can even be used to define the exponential function.)

Can we perform these operations with matrices? Hell yeah! We can define the matrix exponential by plugging a square matrix into the Taylor series.
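As a rough illustration, here is a minimal pure-Python sketch that sums the first few terms of the series for a square matrix stored as nested lists. The function names (`identity`, `matmul`, `matrix_exp`) and the default of 30 terms are assumptions made for this example. Truncating the series naively like this is fine for small, well-behaved matrices, but it is not how numerical libraries typically compute matrix exponentials.

```python
import math

Matrix = list[list[float]]


def identity(n: int) -> Matrix:
    """The n-by-n identity matrix, which plays the role of X^0."""
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Product of two square matrices of the same size."""
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
        for i in range(n)
    ]


def matrix_exp(x: Matrix, terms: int = 30) -> Matrix:
    """Sum of X^k / k! for k = 0, ..., terms - 1 (a truncated Taylor series)."""
    n = len(x)
    result = identity(n)  # the k = 0 term
    term = identity(n)    # holds X^k / k! for the current k
    for k in range(1, terms):
        # X^k / k! = (X^(k-1) / (k-1)!) * X / k
        term = [[entry / k for entry in row] for row in matmul(term, x)]
        result = [
            [r + t for r, t in zip(result_row, term_row)]
            for result_row, term_row in zip(result, term)
        ]
    return result


if __name__ == "__main__":
    # The exponential of this matrix should be a rotation by 1 radian:
    # [[cos 1, -sin 1], [sin 1, cos 1]].
    rotation = matrix_exp([[0.0, -1.0], [1.0, 0.0]])
    print(rotation)
    print(math.cos(1.0), math.sin(1.0))
```

For a 1×1 matrix the function reduces to the ordinary series for eˣ, and for a diagonal matrix it exponentiates each diagonal entry separately, which makes for two quick sanity checks.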

That’s the mystery of matrix exponentials.

It’s not that simple, though: I skipped over a ton of details. For instance, infinite sums might not exist. Determining whether a given series converges can be a hard question, let alone finding a closed-form expression for the sum. For matrix inputs, it is even more difficult.

However, that is a topic for another day :)

Conclusion

The exponential function is one of the most important functions in mathematics, appearing in thousands of applications, ranging from dynamical systems to machine learning.

Despite that, we hardly ever think about its depths; we don’t ponder questions like how to multiply e by itself π times.

Understanding the mysteries of the exponential function teaches us about mathematics itself. If we look behind the curtain, we can learn key techniques like wishful thinking and the principle of permanence.

In math, patterns and structures are often much more important than models. If you take home one message from this post, let it be this.
